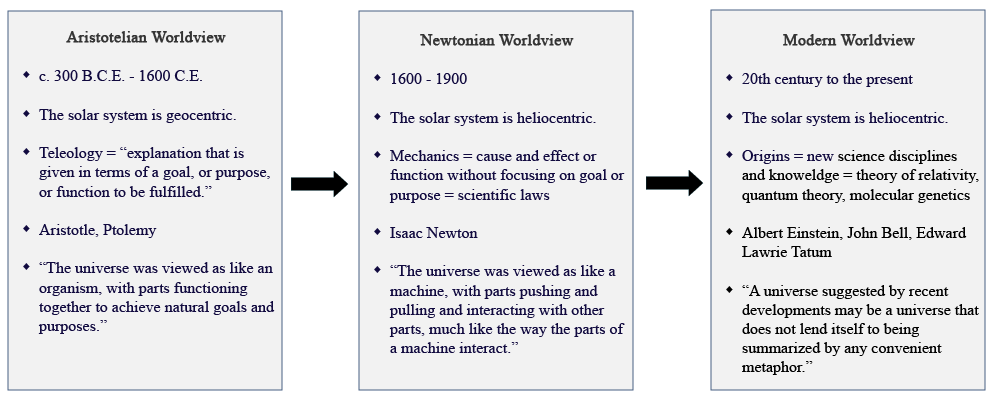

LEARNING OBJECTIVES

1. Explain the factors that gave rise to science in the Western tradition and identify the characteristics that defined the Aristotelian worldview.
2. Explain what gave rise to the Newtonian worldview and how it changed the course of science in the Western tradition.
3. Identify the thresholds of complexity that emerged and how they laid the foundations for the arrival of humans.

View Timeline: First 10 Billion Years

IN THE BEGINNING

How did it all start? This is the question humanity has asked since the dawn of time, and it is a question that still lingers in the quiet corners of our minds today. Across history, people have sought answers through a tapestry of stories (myths, legends, and scientific theories), each striving to explain the creation of our solar system and the emergence of life on Earth. These stories are as diverse as the cultures that shaped them, reflecting the fears, hopes, and imaginations of those who told them. Yet when we compare their content, we often find recurring themes: universal threads that transcend time and place, weaving together a shared human longing to understand our beginnings. These include, but are not limited to:

- The superiority of the storyteller’s people.

- The omission or condemnation of women.

- The justification of human exploitation of nature.

- The contrast between dark and the generation of light (creation).

- The making of humans from earthly matter, such as clay.

The one thing all origin stories, past and present, seek to explain is the creation of the world and the unfolding of its history. In this course, we’ll ask the same timeless question: How did we come to be the way we are today? But rather than turning to the divine as the author of our story, we will turn to modern science as our storyteller. Why science? As the historian David Christian explains, “As much as possible, modern science bases its claims on evidence rather than authority. And, as in a court of law, science is always open to new forms of evidence, even if these require revisions to standard claims about reality.” With this approach, our modern origin story will begin by exploring the “thresholds of complexity,” a series of pivotal moments identified by Christian and other historians who follow the Big History framework. Together, these thresholds serve as milestones in the grand story of how it all began.

THE RISE OF SCIENCE IN THE WESTERN TRADITION

Before we begin exploring what science can tell us about our origins, we must first understand how science itself came to be. After all, it is our storyteller. Nearly all ancient civilizations practiced forms of science, from the Egyptians’ mastery of engineering to the Babylonians’ detailed astronomical records, and the mathematical insights of the Indo-Pakistan subcontinent. But in the Western tradition, science’s first true birth came with the ancient Greeks. For reasons still debated by historians, the Greeks were uniquely preoccupied with the potential of human achievement, unbound by the will of gods.

This emphasis on human greatness is reflected in Homer’s (c. 750 B.C.E.) epics, The Iliad and The Odyssey, where heroes lived for areté—the pursuit of excellence. For the Greeks, areté, combined with nike (victory), led to kleos (fame) and time (honor)—the ultimate measures of a meaningful life. These cultural values placed extraordinary importance on mastery, competition, and the quest for greatness, shaping not only their art and warfare but also their intellectual pursuits. Driven by the same passion that inspired their heroes, the Greeks began to turn their minds toward understanding the natural world, not through divine revelation, but through reason, observation, and debate. In doing so, they laid the groundwork for the scientific tradition that would later define the Western world.

From the Homeric world that celebrated human potential and achievement emerged a revolutionary worldview that fused together two fundamental ideas. The first was the systematic use of reason to deepen humanity’s understanding of reality. The second was the belief that the world could be explained through natural principles rather than divine intervention. This new way of thinking was rooted in observation and classification, a methodical approach to uncovering the workings of the natural world.

The first pioneers of this intellectual shift turned their gaze from myth to nature, seeking to uncover its underlying truths. Thales of Miletus (c. 624–546 B.C.E.), for example, proposed that water was the material source of all things, a bold departure from mythological creation stories. Similarly, Pythagoras of Samos (c. 571–496 B.C.E.) founded a community in Croton that devoted itself to mathematics, believing that numbers and their relationships were the foundation of all existence. These early thinkers laid the groundwork for a new way of understanding the universe—one guided by reason, evidence, and the search for natural explanations.

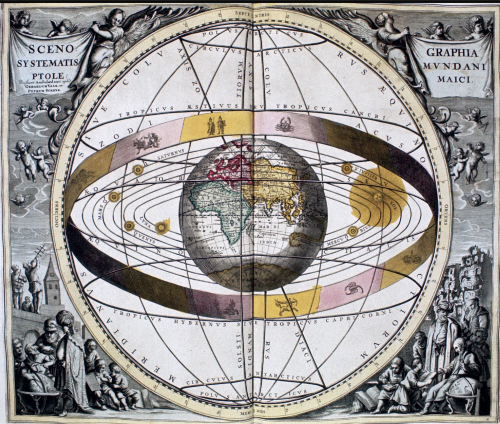

The Greeks also sought to unravel the mysteries of the heavens, striving to understand the motions of the stars and planets. One of the most influential figures in this pursuit was the philosopher Aristotle (384–322 B.C.E.), who proposed a geocentric model of the cosmos. He argued that Earth was the center of the universe, that celestial motions were circular, and that the heavens were eternal and incorruptible. Aristotle’s ideas served as the foundation for later refinements by Hipparchus of Nicaea (c. 190–127 B.C.E.), who applied mathematical rigor to chart the heavens with remarkable precision.

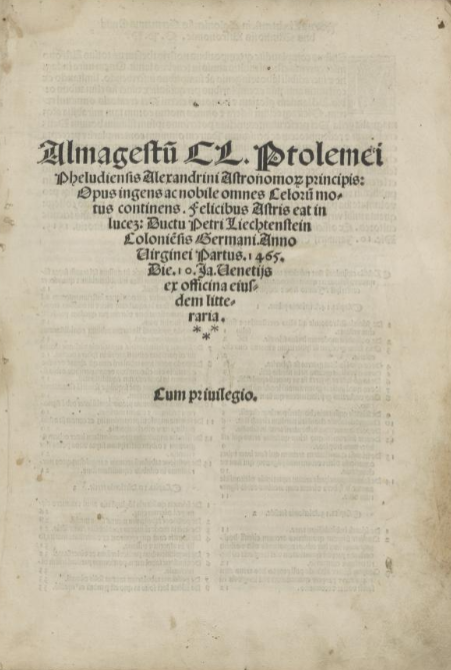

These early models of the cosmos were synthesized and expanded upon by the Roman-Egyptian astronomer Claudius Ptolemaeus (c. 100–175 C.E.), better known as Ptolemy. In his groundbreaking work Almagest (Megalé Syntaxis), Ptolemy compiled and refined centuries of astronomical knowledge into a systematic cosmography. His geocentric system, which combined observation and mathematical calculation, would dominate Western thought for over a millennium. Though these early ideas were later supplanted by heliocentric models, they stand as a testament to the Greeks’ relentless pursuit of knowledge and their desire to bring order to the celestial realm.

Ptolemy’s Almagest became a foundational text for medieval astronomers, serving as the definitive guide to the cosmos for centuries. Its geocentric model aligned with Christian theology, which added the belief that God had created the heavens and the Earth just 6,000 years ago. By the Middle Ages, cosmography in Europe was firmly dominated by an Earth-centered universe, a worldview that reinforced humanity’s central place in creation and was upheld as divine truth. This synthesis of Greek scientific thought and Christian doctrine shaped European understanding of the cosmos for over a millennium, influencing scholars, theologians, and astronomers alike. In this worldview, the heavens were not just celestial spheres but a reflection of God’s perfect design, immutable and eternal.

THE CONTRIBUTIONS OF THE ISLAMIC WORLD

The second birth of science in the Western tradition came in the 11th century, as Europe began to recover from centuries of economic and cultural collapse. This revival, however, owes much of its conception to the Islamic world, and particularly to the Abbasid Caliphate (750–1258 C.E.), whose leadership spearheaded a remarkable translation movement. The Abbasids sought to preserve and expand upon the scientific legacy of ancient Greece, Persia, India, and beyond, creating a vast repository of intellectual treasures. These texts, enriched with new insights by Islamic scholars, flowed into Europe through cultural exchanges in Spain, inspiring a new generation of natural philosophers. By 1200, for example, most of Aristotle’s works—once lost to the West—had been translated from Arabic into Latin and were finding their way into European universities, reshaping medieval philosophy and scientific inquiry.

Yet, the Islamic world’s contributions extended beyond philosophy. Among the most transformative was the introduction of the Hindu-Arabic numeral system, a revolutionary mathematical tool. This decimal-based system, with its nine digits (1–9) and the concept of zero, was far more efficient than Roman numerals and would become the universal language of science. It enabled more complex calculations, laying the foundation for advancements in fields as diverse as astronomy, engineering, and commerce. Together, these contributions sparked a scientific and intellectual awakening in Europe, bridging the ancient and modern worlds.

The Hindu-Arabic numeral system was a radical improvement over the Roman numeral system, which lacked both place value and the concept of zero. These limitations made Roman numerals cumbersome and impractical for complex calculations, especially as Europe’s economy expanded. The adoption of the Hindu-Arabic numeral system by European merchants addressed the growing economic complexity brought about by the commercial revolution of the Middle Ages. With the rise of international trade, banking entities, and financial networks, new methods of computation were essential to keep pace with the demands of commerce.
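To make the contrast concrete, here is a minimal Python sketch of the two notations. It is an illustration only, not a historical algorithm: the function names `roman_to_int` and `place_values` are hypothetical, and the sample number is arbitrary. The first function has to walk through a Roman numeral symbol by symbol, adding or subtracting as it goes, while the second shows how each digit in a Hindu-Arabic number carries a value fixed by its position, with zero holding an empty place.

```python
# Illustrative sketch: additive Roman notation versus positional (place-value) notation.
ROMAN_VALUES = {"I": 1, "V": 5, "X": 10, "L": 50, "C": 100, "D": 500, "M": 1000}


def roman_to_int(numeral: str) -> int:
    """Convert a Roman numeral to an integer, symbol by symbol."""
    total = 0
    for i, symbol in enumerate(numeral):
        value = ROMAN_VALUES[symbol]
        # A smaller symbol written before a larger one is subtracted (IV = 4, XC = 90).
        if i + 1 < len(numeral) and value < ROMAN_VALUES[numeral[i + 1]]:
            total -= value
        else:
            total += value
    return total


def place_values(number: int) -> list:
    """Split a decimal number into the contribution made by each digit position."""
    digits = str(number)
    return [int(d) * 10 ** (len(digits) - i - 1) for i, d in enumerate(digits)]


if __name__ == "__main__":
    print(roman_to_int("MCCII"))  # 1202
    print(place_values(1202))     # [1000, 200, 0, 2]
```

Notice that the Roman form has no symbol for the empty tens place, whereas the positional form records it explicitly as 0. That placeholder is what lets column-by-column addition and multiplication work the same way for numbers of any size.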

The historian Raffaele Danna explains that the use of the Hindu-Arabic numeral system “transformed economic practices, together with several other fields, such as visual arts, architecture, shipbuilding, surveying and engineering.” He also emphasizes that “the story of the adoption of Hindu-Arabic numerals allows us to appreciate that the scientific revolution was also indebted to more than three centuries of mathematical experimentation carried out by European practitioners.” By providing a flexible and efficient mathematical language, the Hindu-Arabic numeral system not only revolutionized trade and finance but also laid the groundwork for scientific advancements. It gave science a universal tool—one that would transform humanity’s ability to measure, calculate, and ultimately redefine the world.

A NEW WORLDVIEW

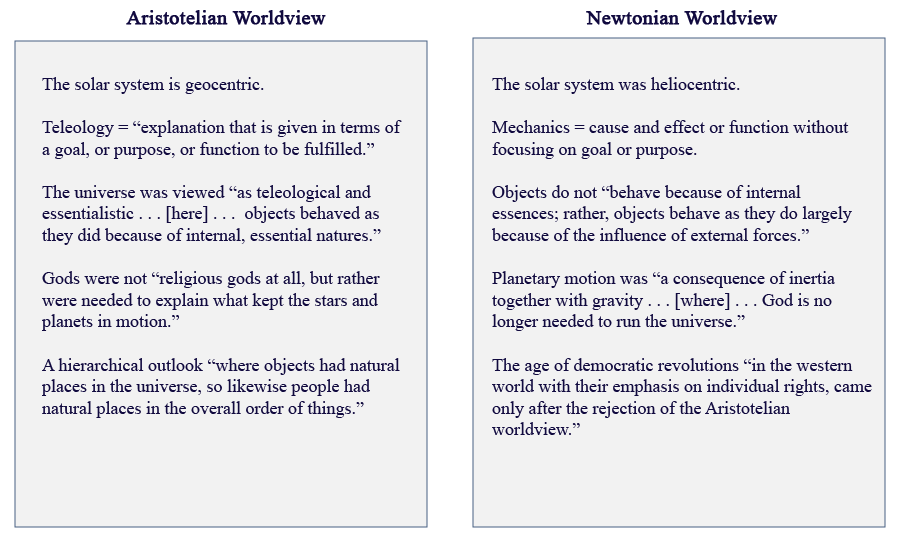

From the death of Aristotle to the 1600s, the Western world was shaped by an Aristotelian worldview that dominated thought for nearly two millennia. As the philosopher Richard DeWitt explains, this perspective was built on “the belief that the Earth was the center of the universe, that objects had essential natures and natural tendencies, that the sublunar region was a place of imperfection and the superlunar region a place of perfection.” These ideas formed the bedrock of classical and medieval cosmography, creating a coherent vision of the cosmos that was widely accepted across the Western world.

By the 1600s, however, cracks were beginning to appear in this intellectual edifice. New evidence—drawn from revolutionary tools like the telescope and inspired by the natural philosophers of the Middle Ages—challenged the Aristotelian model and set the stage for a new cosmography. This shift culminated in the Newtonian worldview, a radical reimagining of the universe driven by observation, experimentation, and mathematics. What changed, and what generated this seismic shift in understanding?

The answer lies in the mid-16th century, when the classical worldview began to crumble under the weight of discoveries that revealed the cosmos in unprecedented ways. Building on a foundation laid by medieval thinkers, a new generation of scholars questioned long-held assumptions, sparking what we now call the Scientific Revolution—the third birth of science in Europe. This intellectual upheaval did not merely refine old ideas; it replaced them with a wholly new way of understanding the natural world, reshaping human thought and paving the way for modern science. For example:

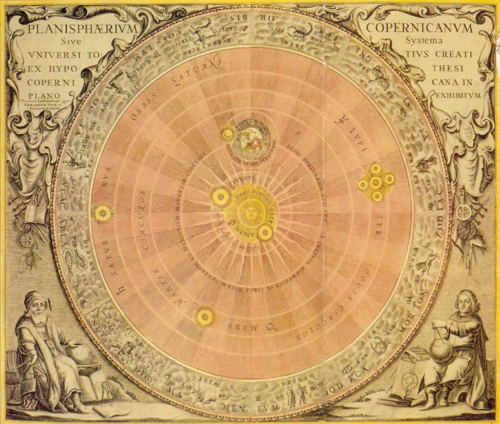

- The shattering of the Aristotelian system with the introduction of the heliocentric model.

- The importance placed on experimentation and measurement for arriving at cosmographic knowledge.

- The mathematization of science through the adoption of Hindu-Arabic numerals.

The Scientific Revolution provided a template for investigating the natural world, referred to as the scientific method, which implemented the following guidelines:

- The collection of data (observing) by precise measurements and controlled repeatable experiments.

- The formulation of a testable hypothesis (proposing explanations) to explain regularities or anomalies in the data.

- The verification or falsification (testing explanations) of those hypotheses by comparing their predictions with the results of new measurements or experiments.

The adoption of these new means and methods stimulated the development of some of the major branches of the sciences (chemistry, biology, and electromagnetic theory for example). These developments in turn would facilitate a shift in science, a shift from cosmography (mechanics of the heavens) to cosmology (origins of the heavens).

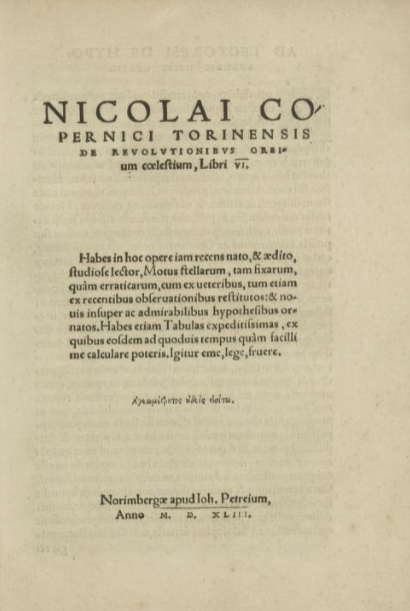

Nicolaus Copernicus (1473–1543), a Polish astronomer, was the first to turn geocentric cosmography on its head. In his groundbreaking work De revolutionibus orbium coelestium, Copernicus proposed a heliocentric model of the universe, arguing that the planets, including Earth, revolve around a stationary sun. This radical idea challenged the long-standing Ptolemaic system and the theological notion that Earth occupied the center of God’s creation. Copernicus’s theory was the spark that lit the fuse of a cosmic revolution.

Building on this upheaval, the Danish astronomer Tycho Brahe (1546–1601) made significant contributions by charting the stars with unparalleled precision. Known as the greatest pre-telescopic astronomer, Brahe compiled detailed astronomical tables that served as an essential resource for future discoveries. Although he rejected Copernicus’s heliocentric model, Brahe devised the Tychonic System, a hybrid that combined features of both the Ptolemaic and Copernican frameworks. His meticulous observations provided the foundation for his assistant, Johannes Kepler (1571–1630), to take the next monumental step in understanding the cosmos.

Kepler, drawing on Brahe’s data and his own years of analysis of the orbit of Mars, revolutionized astronomy by uncovering the true nature of planetary motion. In 1609, he published his first law of planetary motion, which revealed that planets move in ellipses, not the perfect circles imagined by ancient and medieval thinkers. This shattered the centuries-old belief in celestial perfection and provided a more accurate understanding of the mechanics of the solar system. Together, the work of these three astronomers transformed humanity’s view of the universe, ushering in the age of modern science.

When Galileo di Vincenzo Bonaiuti de’ Galilei (1564–1642) first pointed his telescope toward the heavens, he exclaimed, “I give infinite thanks to God who has been pleased to make me the first observer of marvelous things.” With this simple instrument, Galileo opened a new window to the cosmos, making observations that would forever change humanity’s understanding of the universe. What he saw not only deepened the wonder of the night sky but also provided compelling evidence for the validity of the Copernican system.

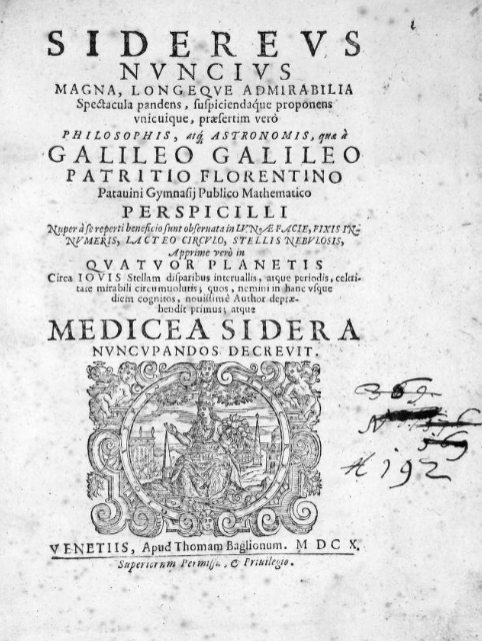

In 1610, Galileo compiled his groundbreaking discoveries in a work entitled Starry Messenger (Sidereus Nuncius). Among his conclusions were revelations that shattered long-held Aristotelian and Ptolemaic beliefs: the moon was not a smooth, perfect sphere but a rugged and cratered body, and Jupiter had moons of its own, proving that not all celestial objects orbited Earth. Later that year, he also observed that Venus displayed phases like the moon, consistent with its orbit around the sun. These observations dealt a powerful blow to the geocentric model of the universe and solidified Galileo’s place as one of the most transformative figures of the Scientific Revolution.

Galileo continued to expand on his revolutionary views of the cosmos in subsequent works, further challenging the geocentric model and redefining humanity’s understanding of the heavens. Unsurprisingly, these ideas provoked fierce opposition from the Church, which had deeply embedded the Aristotelian worldview into its theological framework. The geocentric model, with Earth at the center of creation, was seen as not just a scientific truth but a reflection of divine order. Thus, in 1614, Galileo was accused of heresy, his ideas viewed as a dangerous challenge to both scripture and Church authority.

This conflict came to a head in 1632, when Galileo published his Dialogue Concerning the Two Chief World Systems. In this daring and provocative work, Galileo staged a fictional debate between proponents of the Ptolemaic (geocentric) system and the Copernican (heliocentric) system, heavily favoring the latter. The Dialogue was not only a defense of Copernican theory but a direct affront to Church teachings, sparking outrage among religious authorities. As a result, the charge of heresy resurfaced, leading to Galileo’s infamous trial before the Roman Inquisition in 1633—a pivotal moment in the history of science and its uneasy relationship with religious power.

Referred to by many as the culminating figure of the Scientific Revolution, Isaac Newton (1642–1727) unified two centuries of observation, experimentation, and mathematical insight in his magnum opus, Philosophiae Naturalis Principia Mathematica (Mathematical Principles of Natural Philosophy), published in 1687. In this groundbreaking work, Newton revealed the universal force of gravity, a law that governs the motion of all objects, from falling apples on Earth to the orbits of planets in the heavens. This discovery shattered the long-standing divide between terrestrial and celestial physics, uniting the cosmos under a single set of natural laws for the first time in human history.

Newton’s Principia not only synthesized the discoveries of earlier scientists like Galileo, Kepler, and Descartes but also provided a mathematical framework that would become the foundation of classical mechanics. His laws of motion and universal gravitation revolutionized cosmography, offering a model of the universe that remains central to modern physics and astronomy. By showing that the same force operating on Earth extended to the farthest reaches of space, Newton redefined humanity’s understanding of the cosmos and cemented his legacy as one of the greatest minds in history.
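The claim that one force governs both the falling apple and the orbiting moon can be checked with a short calculation. The sketch below is an illustration under stated assumptions, not a passage from the Principia: it uses the standard modern form of Newton's law, F = G × m1 × m2 / r², with approximate modern values for the constants, and the function names are hypothetical.

```python
# Rough sketch: Newton's law of universal gravitation, F = G * m1 * m2 / r**2.
# All constants are approximate modern values, assumed here for illustration.
G = 6.674e-11            # gravitational constant, N m^2 / kg^2
EARTH_MASS = 5.972e24    # kilograms
EARTH_RADIUS = 6.371e6   # metres, mean radius
MOON_DISTANCE = 3.844e8  # metres, mean distance between Earth and the moon


def gravitational_force(m1: float, m2: float, r: float) -> float:
    """Attractive force in newtons between two masses separated by r metres."""
    return G * m1 * m2 / r**2


def acceleration_toward_earth(r: float) -> float:
    """Acceleration (m/s^2) of any object at distance r from Earth's center."""
    return G * EARTH_MASS / r**2


if __name__ == "__main__":
    apple = acceleration_toward_earth(EARTH_RADIUS)   # an apple at the surface
    moon = acceleration_toward_earth(MOON_DISTANCE)   # the moon in its orbit
    print(f"Apple: {apple:.2f} m/s^2")
    print(f"Moon:  {moon:.5f} m/s^2")
    print(f"Ratio: {apple / moon:.0f}")
```

The moon sits about 60 Earth radii away, so the same formula predicts an acceleration roughly 60², or about 3,600, times weaker than at the surface. The pull that drops an apple and the pull that bends the moon's path differ only in distance, which is the unification of terrestrial and celestial physics described above.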

THE THRESHOLDS OF COMPLEXITY

With the Newtonian worldview displacing the Aristotelian paradigm, science ushered in a cascade of developments between 1600 and 1900 that laid the foundations for new disciplines and remarkable achievements. The precise, mathematical approach introduced by Newton gave rise to revolutions in physics, biology, astronomy, and geology, reshaping how we understand the natural world and our place within it. These breakthroughs not only allowed us to explore human history beyond traditional academic confines but also wove our story into the grand architecture of the cosmos.

Before we delve into what makes humanity exceptional—our creativity, curiosity, and capacity for reflection—we must first look backward. To understand ourselves fully, we must uncover our origins in the deep past of the universe, placing the human story within the vast history of the cosmos itself. By doing so, a fundamental question arises. Does learning about the immense scale of the universe make human life feel less important—or could it deepen our understanding of who we are and where we come from?

Our look into the deep past begins with Edwin Hubble, whose groundbreaking observations revealed that the universe is expanding. By studying the redshift of light from distant galaxies, he discovered that the farther away a galaxy is, the faster it appears to be moving away from us. This remarkable finding suggested that in the distant past, the universe must have been compacted into a single, incredibly dense point. Scientists believe that this point, often referred to as a “singularity,” underwent a rapid and extraordinary expansion, a phenomenon now known as the Big Bang. This expansion unleashed unimaginable energy, creating matter and propelling it outward to form the billions of galaxies that make up the vast universe we see today.

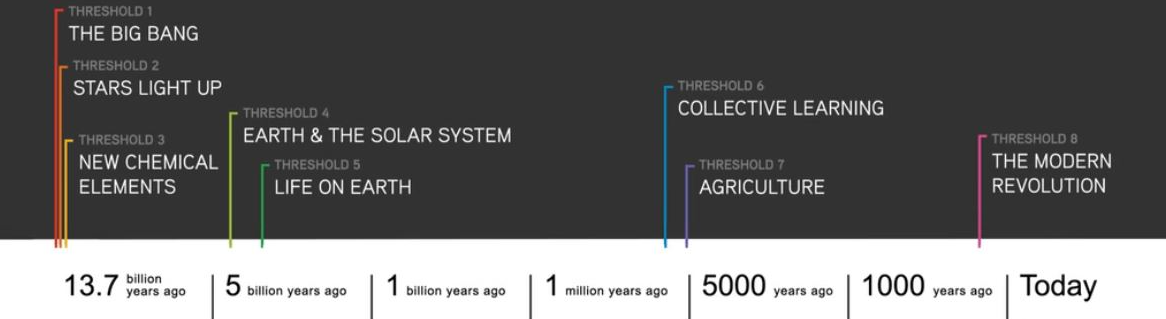

Hubble’s Law, which demonstrated that galaxies are moving away from us at speeds proportional to their distances, was the first major step toward what we now call the Big Bang Theory. This groundbreaking discovery revealed that the universe is expanding, suggesting that it must have originated from a single, dense point in the distant past. This moment marks what David Christian and the framework of Big History identify as the first threshold of complexity: the creation of the universe itself. At this threshold, the cosmos was born in a flash of unimaginable energy, laying the foundation for everything that would follow—galaxies, stars, planets, and eventually life. It was the ultimate beginning, a moment when simplicity gave way to the possibility of complexity, shaping the trajectory of the universe and humanity’s place within it.
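Hubble's Law is simple enough to express in a few lines of Python. The sketch below is a rough illustration, not a cosmological model: it assumes a Hubble constant of 70 km/s per megaparsec (modern measurements fall near this value, but the exact figure is still debated), and the function names are hypothetical. It shows the proportional relationship between distance and recession speed, and how running the expansion backward at a constant rate gives a first estimate of the age of the universe.

```python
# Sketch of Hubble's Law: recession velocity = H0 * distance.
H0 = 70.0                     # assumed Hubble constant, km/s per megaparsec
KM_PER_MPC = 3.0857e19        # kilometres in one megaparsec
SECONDS_PER_GYR = 3.15576e16  # seconds in one billion years (365.25-day years)


def recession_velocity(distance_mpc: float, h0: float = H0) -> float:
    """Speed (km/s) at which a galaxy at the given distance appears to recede."""
    return h0 * distance_mpc


def hubble_time_gyr(h0: float = H0) -> float:
    """Rough age estimate in billions of years: 1 / H0, assuming constant expansion."""
    seconds = KM_PER_MPC / h0
    return seconds / SECONDS_PER_GYR


if __name__ == "__main__":
    for distance in (10, 100, 1000):
        print(f"{distance:>5} Mpc -> {recession_velocity(distance):>8.0f} km/s")
    print(f"Estimated age: about {hubble_time_gyr():.0f} billion years")
```

Doubling the distance doubles the speed, which is exactly what "proportional" means here. With the assumed value of the constant, the estimate comes out to roughly 14 billion years, in line with the timescale used throughout this chapter.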

About 380,000 years after the universe was born, it had cooled enough for the first atoms to form, primarily free-floating hydrogen and helium. These atoms began to coalesce into vast clouds, drawn together by the invisible hand of gravity. Over the next few hundred million years, gravity pulled these clouds tighter and tighter, creating immense pressure and heat at their cores. This process ignited nuclear fusion, the combining of hydrogen nuclei into helium, and the energy released gave birth to the first stars, brilliant beacons of light in the once-dark universe. This was the second threshold of complexity: the creation of stars.

These stars were far more than sources of light; they were engines of creation. Their intense heat and fusion processes created the preconditions for new levels of complexity. Gravity, still at work, began organizing stars into vast galactic structures, while the universe itself continued to expand, spreading these newly formed galaxies across the cosmos.

The third threshold of complexity arrived with the dramatic death of these first stars. When stars collapsed or exploded in supernovae, they forged heavier elements—like carbon, oxygen, and iron—elements that would later form the building blocks of planets, moons, and life itself. These new elements, charted on the periodic table, unlocked an entire universe of possibilities, fueling the creation of increasingly intricate systems. From the ashes of dying stars came the ingredients for everything we know today: Earth, its landscapes, its oceans, and every living being that has ever existed.

The fourth threshold of complexity, the formation of planets, arose as matter collided and clumped together under the influence of the sun’s gravitational pull, a process known as accretion. In our solar system, planets with lighter, gaseous compositions—like Jupiter, Saturn, Uranus, and Neptune—were pushed to the outer edges, while denser, rocky planets—Mercury, Venus, Earth, and Mars—remained closer to the sun. Among these planets, Earth’s distance from the sun, about 93 million miles, and its moderate temperatures made it uniquely suited for the formation and sustenance of life. With its liquid water and stable climate, Earth set the stage for the next great leap in complexity: the origins of life.

Evidence suggests that life on Earth may have begun around 4 billion years ago. But what allowed life to emerge? Until the 20th century, many in the scientific community turned to divine explanations, lacking the tools to investigate life’s origins. By the 1920s, however, scientists like Alexander Oparin and J.B.S. Haldane proposed that chemical evolution could have played a role, with early Earth’s environment fostering the gradual assembly of organic molecules. In 1952, Harold Urey and Stanley Miller tested this idea by recreating a model of Earth’s primordial atmosphere. By zapping the mixture of gases with electrical sparks to simulate lightning, they successfully produced several amino acids, the basic building blocks of proteins. While this experiment did not create life itself, it was a significant step toward understanding how life’s essential ingredients might have formed naturally.

Despite promising advances and theories—such as Panspermia, which suggests life’s building blocks came from space, or the Warm Pond hypothesis—science still faces one of its greatest mysteries: how life arose from non-life. The question of abiogenesis remains unanswered, a profound challenge at the very edge of human understanding, reminding us of the complexity and wonder of our origins.

The discovery of archaebacteria has led some scientists to propose that the origins of life on Earth may lie in these extraordinary organisms. Archaebacteria can thrive in extreme environments, withstanding intense heat, extracting energy from chemicals in rocks, and residing deep below the Earth’s surface. This has sparked the intriguing question: could life have first appeared underground, shielded from the harsh conditions of early Earth? If so, this raises another fundamental question: how did archaebacteria themselves come into being?

All organic life relies on a division of labor between two essential processes: metabolism (the chemical activity that powers life) and replication (the genetic code that preserves and propagates it). In most modern organisms, DNA (deoxyribonucleic acid) is the instruction manual for life, encoding the genetic information necessary to build and maintain living systems. Yet, DNA is powerless on its own—it requires metabolism to function. This conundrum has long puzzled scientists: which came first, metabolism or replication?

The discovery in the early 1980s that RNA (ribonucleic acid) can act as a catalyst offered a potential solution to this chicken-and-egg problem. Unlike DNA, RNA is a versatile molecule that can perform both tasks: it can act as a catalyst to drive metabolic reactions and also encode genetic information. This remarkable dual functionality has led many scientists to conclude that RNA may have been the earliest precursor to life on Earth, a vital evolutionary bridge between non-life and life. While much remains unknown, this discovery has illuminated one of the key steps in understanding the origins of life and continues to fuel exploration into the mystery of how life began.

Life on Earth has been a four-billion-year experiment, shaped by natural selection to produce the immense diversity of organisms we see today. Paleontologists and biologists have made remarkable strides in understanding this experiment, tracing its origins to archaebacteria that may have thrived as early as 4 billion years ago. Some of these early organisms extracted energy by consuming other living beings, while others harnessed sunlight through photosynthesis. From simpler molecules—such as amino acids, nucleic acids, sugars, and proteins—organic matter evolved, eventually giving rise to the building blocks of life as we know it.

The Proterozoic eon, beginning about 2.5 billion years ago, marked a major turning point in the history of life. As free oxygen began transforming Earth’s atmosphere, this “oxygen revolution” unleashed the energy necessary for new and more complex life forms to emerge. Among these were the eukaryotes, single-celled organisms with a nucleus, dating to roughly 1.7 billion years ago. Over time, the fusion of single-celled organisms into larger, multicellular organisms revolutionized life on Earth. Multicellularity set the stage for astonishing evolutionary leaps, allowing for greater specialization and complexity in living systems.

The fossil record is rich with evidence of these milestones. Vertebrates made their first appearance more than 500 million years ago, followed by reptiles roughly 320 million years ago. One of the most spectacular evolutionary stories began during the Triassic period, which opened about 250 million years ago, when dinosaurs first appeared; they went on to dominate Earth’s ecosystems. It was also during this time that the earliest mammals began to emerge, small and unassuming in a world ruled by giants.

But the age of dinosaurs came to a dramatic end at the close of the Cretaceous period, roughly 66 million years ago. A catastrophic asteroid impact plunged Earth into an ecological disaster, as massive dust clouds blocked sunlight, disrupted photosynthesis, and sent temperatures plummeting. The once-dominant dinosaurs were driven to extinction, paving the way for mammals to rise and diversify in the aftermath. This crisis was not just an end; it was also a new beginning, reshaping life on Earth and setting the stage for the evolution of modern species, including humans.

Yet it was in this harsh post-impact environment that mammals not only survived but adapted and flourished. Why? Early mammals had several key traits that gave them an edge in this challenging world. Their small body size made them less reliant on large amounts of food, their warm-bloodedness allowed them to maintain body heat in fluctuating temperatures, and their fur provided insulation. They nourished their young internally, offering better survival rates, and their habit of living in underground burrows shielded them from the worst of the asteroid’s impact. These advantages enabled mammals to endure in an environment that wiped out larger, less adaptable species.

PRIMATES AND THE GREAT LEAP FORWARD

The devastation of the Cretaceous crisis redirected the course of evolution, creating new opportunities for the mammals that survived. Among these survivors, a remarkable group of mammals known as primates began to evolve. Primates developed specialized traits that set them apart from other mammals: limbs with opposable thumbs for grasping, stereoscopic vision for accurately judging distances, and larger brains capable of controlling complex movements and processing detailed sensory information. These adaptations not only allowed primates to thrive in diverse environments but also laid the foundation for the eventual emergence of human ancestors, marking a profound turning point in the story of life on Earth.

The super-family of apes and humans, known as Hominoidea, is divided into three families: Hominidae (great apes and humans), Pongidae (chimpanzees, gorillas, and orangutans), and Hylobatidae (lesser apes, such as gibbons). Molecular dating techniques, which analyze genetic differences to estimate evolutionary timelines, indicate that the Hominidae family began to diverge into two sub-families, Gorillinae (gorillas) and Homininae (humans and our closest extinct ancestors), about 5 to 7 million years ago. Modern humans, along with our immediate ancestors, belong to the Homininae sub-family. This moment of divergence marks the beginning of a remarkable evolutionary journey, one that would eventually lead to the emergence of Homo sapiens. It is this story, our story, that we will now explore.

The story of human evolution begins in Africa, where the earliest members of the Homininae sub-family took their first steps in a long journey that would eventually lead to Homo sapiens. Among the most ancient of these ancestors is Sahelanthropus tchadensis, a species that lived around 7 million years ago and is one of the oldest known members of the Homininae lineage. Discovered in Chad in 2001, Sahelanthropus is notable for its mix of ape-like and human-like traits. Its small brain size, similar to that of a modern chimpanzee, suggests its kinship with earlier primates, but the position of its foramen magnum (the opening in the skull through which the spinal cord passes) indicates that it may have walked upright, an early hallmark of human evolution.

Around 4 million years ago, another key player in this evolutionary story emerged: the Australopithecus genus. Fossils of Australopithecus afarensis, most famously represented by “Lucy,” discovered in Ethiopia in 1974, provide a clearer picture of how early hominins transitioned to bipedalism. Lucy’s pelvis and leg bones, together with fossil footprints preserved at Laetoli in Tanzania, suggest that her species was well adapted to walking upright, even as her long arms and curved fingers retained adaptations for climbing trees. Australopithecus afarensis, which thrived from about 3.9 to 2.9 million years ago, marks a pivotal step toward the emergence of the genus Homo.

These early members of the Homininae lineage reveal how evolution worked slowly but steadily, shaping creatures that straddled two worlds—one in the trees and one on the ground. The discovery of species like Sahelanthropus and Australopithecus has deepened our understanding of human origins, providing a glimpse into the traits—upright walking, tool use, and social behavior—that would eventually define our species.

As the evolutionary path continued, the Homininae lineage gave rise to the genus Homo, which marked a dramatic shift in the story of human evolution. These early humans were defined by their increasing brain size, the development of more advanced tools, and their growing ability to adapt to and shape their environment. One of the earliest members of the genus, Homo habilis, emerged around 2.4 million years ago, heralding a new era in evolutionary history. It was nicknamed “Handy Man” for its association with stone tools. Fossils discovered in East Africa show that Homo habilis had a larger brain (about 600–700 cc) than its Australopithecus predecessors, signaling the start of significant cognitive development. This species is closely associated with Oldowan tools: simple stone flakes and cores used for cutting, scraping, and processing food. These tools allowed early humans to exploit a wider range of resources, such as meat, which became an important part of their diet.

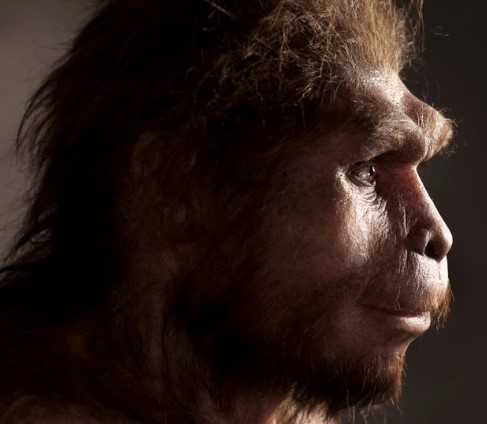

One of the most successful and long-lived species in human history, Homo erectus represents a major leap in the evolution of the genus Homo. Emerging around 1.9 million years ago, Homo erectus spread out of Africa into Asia and Europe, becoming the first human species to migrate across continents. Fossils of Homo erectus have been found in places as far-flung as Java, Indonesia (dubbed “Java Man”) and Dmanisi, Georgia, demonstrating this species’ remarkable adaptability to diverse climates and ecosystems.

With Homo erectus, we begin to see the early stages of human-like culture. Evidence suggests that this species learned to control fire, which not only expanded dietary possibilities but also created opportunities for social interaction and cooperation, as groups likely gathered around fire for protection and warmth. The ability to migrate across continents reflects a level of planning, adaptability, and environmental mastery unseen in earlier species. These traits laid the foundation for even more complex social structures and innovations in later human species.

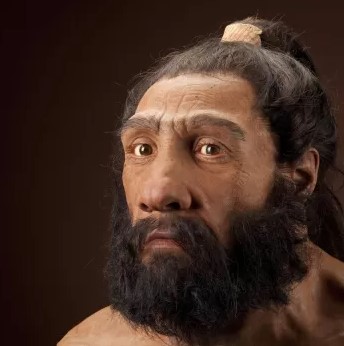

The story of human evolution takes another transformative turn with the emergence of species like Homo heidelbergensis, the ancestor of both Neanderthals (Homo neanderthalensis) and modern humans (Homo sapiens).

Around 700,000 years ago, Homo heidelbergensis began to display increasingly sophisticated behaviors, such as hunting large animals with spears and constructing shelters. These advancements laid the groundwork for the emergence of Homo sapiens, the species that would go on to dominate the planet.

Around 300,000 years ago in Africa, Homo sapiens—our species—emerged as the latest chapter in the story of human evolution. Distinguished by advanced cognitive abilities, complex social structures, and extraordinary adaptability, Homo sapiens would go on to shape the world in ways no other species had before. But the journey of modern humans was not immediate; it unfolded over tens of thousands of years as Homo sapiens faced challenges, competed with other human species, and eventually spread across the globe.

The earliest known fossils of Homo sapiens were discovered at Jebel Irhoud, Morocco, and date to about 300,000 years ago. These fossils show a mix of archaic and modern features: a braincase similar in size to that of modern humans (around 1,300–1,400 cc) but a more elongated skull shape, resembling earlier species. What sets Homo sapiens apart is not just physical features but the emergence of unprecedented cognitive abilities.

This cognitive leap is often referred to as the “Great Leap Forward.” Early Homo sapiens developed sophisticated tools, used fire extensively, and likely engaged in symbolic thought, as evidenced by the first known instances of art and personal adornment. Blombos Cave in South Africa contains engraved ochre and shell beads dating back as far as 100,000 years, providing some of the earliest evidence of abstract thinking and symbolic communication. The emergence of culture also marked a defining characteristic of Homo sapiens. Unlike earlier hominins, Homo sapiens demonstrated a capacity for creativity, collaboration, and innovation that transformed their way of life.

IN CLOSING

In summary, the Chronometric Revolution delves into the evolution of scientific thought in the Western tradition, tracing the shift from the Aristotelian to the Newtonian worldview. It begins with the Aristotelian perspective, rooted in ancient Greek philosophy, which emphasized reason and observation as tools for understanding natural phenomena, attributing events to natural causes rather than divine intervention. This approach eventually gave way to the Newtonian worldview during the Scientific Revolution, which introduced a mechanistic understanding of the universe governed by universal laws, such as motion and gravitation. The course also explores “thresholds of complexity,” pivotal moments in the history of the universe, such as the formation of stars and planets, the emergence of life, and the rise of human consciousness, to illuminate the foundations of human existence. Together, these themes highlight the progression of scientific inquiry and its profound impact on our understanding of the universe and humanity’s place within it.

Some final thoughts on worldview. Although the term “worldview” has been used widely for over a century, it does not carry a single, standard definition. Therefore, it is important to clarify how I will be using the term in this discussion. In short, I will use “worldview” to refer to “a system of beliefs that are interconnected—like the pieces of a jigsaw puzzle that, when put together, form a larger, cohesive picture. A worldview is not merely a random collection of unrelated beliefs but an intertwined, interrelated system of ideas that work together to shape how we interpret the world around us.”

Before we move forward, let us revisit the three worldviews you have encountered so far and explore how they connect to the broader themes of this topic.